Spotting the AI-Native Engineer

There is a widening chasm between teams that have truly built AI into how they ship and teams that are merely using Copilot as autocomplete. The difference is not incremental. It is roughly an order of magnitude. And it is already showing up in the data.

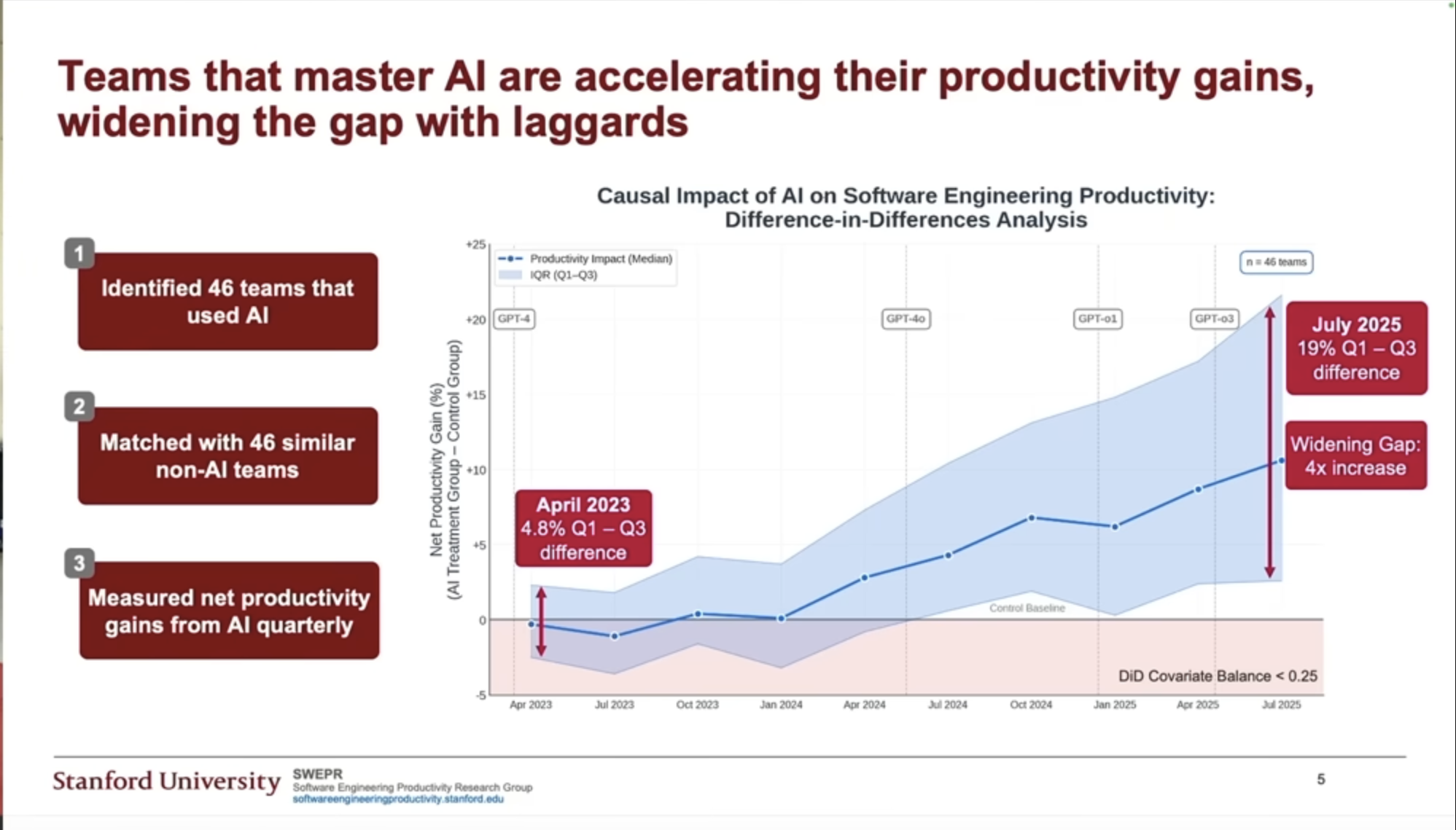

Stanford HAI research confirms what many of us are feeling anecdotally. In some teams, AI shows up as a marginal improvement: productivity gains of roughly 2% when it is used as occasional autocomplete. In teams that have restructured how they work around AI, gains reach 20% on similar tasks.

But the real story is not the difference in percentages. It is the rate of divergence. Since 2023, the productivity gap between low-AI and AI-native workflows has widened roughly fourfold, and the divergence is still accelerating. That is not a talent advantage. That is a structural one. Every quarter you staff with the wrong profile, you are not standing still. You are falling behind every competitor who got this right before you.
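To make the compounding argument concrete, here is a minimal sketch of the arithmetic. The 2% and 20% figures are the ones quoted above. Everything else is an assumption made for illustration: in particular, the idea that each team's gain compounds once per quarter is hypothetical, and the function names are invented for this example.

```python
# Illustrative arithmetic only. The 2% and 20% gains come from the text above.
# ASSUMPTION: gains compound once per quarter. The source does not state this;
# it is a simplification used to show how a gap can widen over time.

LOW_GAIN = 0.02   # AI used as occasional autocomplete
HIGH_GAIN = 0.20  # workflow restructured around AI


def relative_output(gain: float, periods: int = 1) -> float:
    """Output relative to a no-AI baseline after compounding `gain` over `periods`."""
    return (1.0 + gain) ** periods


def output_ratio(low_gain: float, high_gain: float, periods: int = 1) -> float:
    """How many times more the high-gain team produces than the low-gain team."""
    return relative_output(high_gain, periods) / relative_output(low_gain, periods)


if __name__ == "__main__":
    for quarters in (1, 4, 8):
        ratio = output_ratio(LOW_GAIN, HIGH_GAIN, quarters)
        print(f"after {quarters} quarter(s): {ratio:.2f}x the output")
```

Under these assumptions the single-period gap is modest, but the ratio grows with every additional period, which is the structural point: the gap is driven by the rate, not the starting position.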

The engineers who will define your next two years are already on the market. Your current screening process almost certainly cannot find them.

The Two Archetypes

Most hiring frameworks assume the answer is one of two things: the seasoned senior engineer with decades of hard-won instinct, or the sharp junior who grew up on ChatGPT and moves fast. Both assumptions are incomplete. The top 1% today arrives from two opposite directions, and understanding the mix is the whole game.

The first archetype is the seasoned engineer who refuses to stand still. Not the one coasting on institutional knowledge — the one who treats every new model release like a tool drop, absorbs it immediately, and asks: how does this change how I work? They bring years of judgment about systems, failure modes, and trade-offs. But crucially, they are plugging that foundation into the latest capabilities fast enough to extract maximum leverage. Experience is the multiplier. Velocity is the engine.

The second archetype is the junior who treats AI as a direct line into the collective intelligence of the best engineers who ever lived. They are not just autocompleting syntax. They are learning architecture patterns, debugging strategies, and system design intuitions in real time, from something that has read essentially everything. They arrive at sound designs not because they already knew the answer, but because they knew how to extract it.

What makes both archetypes elite is the same underlying trait: they are optimizing for acceleration, not for their current state.

This reframes the entire hiring question. You are not trying to assess where someone is. You are trying to assess how fast they are moving — and whether that rate is compounding. Current knowledge is a lagging indicator. In an era where the tools rewrite themselves every few months, what someone knew last year is nearly irrelevant. What matters is how fast they are integrating what dropped last week.

The signal is not the resume. It is the trajectory. And most hiring processes are completely blind to it.

The Signals Live in Motion

The true signals today live almost entirely in how an engineer interacts with AI in real time. Not the output. The interaction.

For the seasoned archetype, watch for clarity of direction from the very first prompt. Are they specifying problems precisely before touching the tools? Can they orchestrate multiple agents concurrently while holding a consistent design vision across the whole task? The senior AI-native engineer does not just use the model; they manage it. You see it in how they course-correct, when they override, and how they decompose a complex problem before a single line of code is written.

For the junior archetype, the signal is different but equally legible. Watch for the quality of their learning loop with the model. Are they using it as a peer mentor, extracting and verifying knowledge before committing to a design path? The best junior AI-native engineers run fast, efficient rounds of research and validation before executing. They arrive at sound designs not through prior experience but through disciplined extraction.

The junior's trait, the ability to rapidly learn and extract knowledge from AI, is arguably the more durable signal of the two. As models grow more capable, as harnesses improve, as context windows widen, the engineer who knows how to get the most out of what lives inside these models will keep compounding. That skill does not expire. It appreciates.

Seeing What Resumes Cannot

Since the signals live in interactions, the methodology follows directly: you need to see the candidate working with AI tooling in real time, on a problem sophisticated enough to stress-test their judgment. Not a toy problem. Not a sanitized take-home. A live session with enough complexity that the candidate has to make real decisions — about when to delegate, what to spec first, how to sequence the work.

While they are in motion, interrupt them. Ask how they think the AI is doing. Ask why they chose to run that step concurrently rather than sequentially. Ask when they would stop trusting the output. These interjections reveal more in thirty seconds than a resume reveals in thirty minutes.

A second strong signal is the master plan. Given a non-trivial problem, how fast does the candidate arrive at a sound, well-structured plan — and how good is their judgment about that plan? Not just the plan itself, but what they flag as fragile, what they would pressure-test before executing, where they think the model's confidence should be discounted. A candidate who produces a fast plan and knows exactly where it might break is showing you something real.

Then there are the technical probes that separate genuine fluency from surface familiarity:

- Do they understand how these models actually work under the hood — not just how to prompt them?

- Can they manage context efficiently, and do they know the limits of each model?

- Do they know which harness to reach for on which class of problem?

- Can they extract maximum leverage across the full stack — from raw model to tooling to orchestration?

This is the actual evaluation surface: not the resume, not the LeetCode score, not the skills checklist. The engineer you are looking for reveals themselves in motion.

Most hiring processes are not built to see that. And the cost is not a missed hire — it is a structural deficit that compounds every quarter you fail to close it.